Category: SAN

How to use Adobe Flash Player after its End of Life — absolutely free

***NOW UPDATED with Apple MacOS instructions, in addition to Microsoft Windows***

***Also updated with the solution to the mms.cfg file not working due to the UTF-8 bug***

You may have seen plenty of announcements over the past few years about Adobe Flash reaching its end of life. Various browser vendors announced that they would disable Flash. Microsoft announced that it would uninstall Flash from Windows through a Windows Update (although only the Flash that came automatically with Windows, NOT user-installed Flash). Apple completely disabled Flash in Safari. Once Flash is finally turned off, you will see the dreaded Flash End of Life logo.

Yes, I agree with Steve Jobs: Flash is buggy and not secure. However, many IT vendors used Flash to build their management software interfaces. Some common examples are VMware vSphere, Horizon, and HPE CommandView. That management software is not going away, even though most of it is older. In fact, some of these Flash-managed devices will stay in service for the next 10 years. So, what can the desperate IT administrator do to manage his or her devices?

Adobe refers users who need extended Flash support to a company called Harman. HPE charges money for support of older CommandView versions. Do not pay these companies any money to use Flash.

Preparation

I am not recommending the Chrome or Edge browsers for the solution below because they auto-update, and newer versions do not support Flash at all. Further, turning off auto-update in Chrome and Edge is difficult.

Here are 3 methods to get Flash running on your favorite website. The Windows methods assume a 64-bit operating system. The 32-bit Windows files are also included, but that configuration has not been tested (although it will probably work). All the files mentioned in these methods can be downloaded below:

firefox-flash-end-solution-versions.zip_.pdf: Right-click the link and choose “Save Link As” or “Download Linked File As”. Save the file to your computer. Make file extensions visible, remove _.pdf from the end of the file name, and unzip the file (Extract All).

The file contains:

- policies.json

- Firefox Setup 78.6.0esr-64bit.exe

- Firefox Setup 78.6.0esr-32bit.exe

- Firefox 78.6.0esr.dmg

flash-eol-versions.zip_-1.pdf: Right-click the link and choose “Save Link As” or “Download Linked File As”. Save the file to your computer. Make file extensions visible, remove _-1.pdf from the end of the file name, and unzip the file (Extract All).

The file contains:

- mms.cfg

- Flash player for Firefox and Win7 – use this for Solution: install_flash_player.exe

- Flash for Safari and Firefox – Mac: install_flash_player_osx.dmg

- Flash for Opera and Chromium – Mac: install_flash_player_osx_ppapi.dmg

- Flash Player for Chromium and Opera browsers: install_flash_player_ppapi.exe

- Flash Player for IE ActiveX: install_flash_player_ax.exe

- Flash Player Beta 32 bit – May 14, 2020: flashplayer_32_sa.exe, flashplayer_32_sa.dmg

- flash_player_32_0_admin_guide.pdf

Method 1 — Microsoft Windows, if you have Internet Explorer browser and Flash already installed

This method applies to many older Windows operating systems, such as Server 2008, 2012, and 2016, Windows 7, and even older builds of Windows 10. It assumes a 64-bit operating system.

- Do NOT upgrade Internet Explorer to the Microsoft Edge browser.

- Set Internet Explorer to be the default browser in Default Programs.

- Download the mms.cfg file.

- Open the mms.cfg file with Notepad.

- Replace the URL to the right of the equals sign with the address of the Flash website or file that you need.

- Ex. AllowListUrlPattern=https://localhost/admin/

- If you need additional websites, put each one on its own line, as in these examples:

- AllowListUrlPattern=https://localhost/admin/

- AllowListUrlPattern=http://testwebsite.com/

- AllowListUrlPattern=*://*.finallystopflash.com/

- Save the mms.cfg file on the desktop.

- Important: if you are creating the file yourself instead of using my file, make sure that in the Notepad Save As dialog you select “All Files” as the type and “UTF-8” as the Encoding.

- Copy the mms.cfg file into the following directory: C:\Windows\SysWOW64\Macromed\Flash\

- This disables Flash updates and allows Flash to run on the specified websites.

- If you don’t see this directory, it means Flash is not installed and you need to use Method 2 instead.

- Restart the Internet Explorer browser.

- Go to your website.

- This will open Internet Explorer with Flash functional.
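If you would rather generate mms.cfg than edit it by hand, the Python sketch below writes the allow-list lines shown above and saves the file as UTF-8. The four extra settings (AutoUpdateDisable, SilentAutoUpdateEnable, EOLUninstallDisable, EnableAllowList) are an assumption based on the Flash Player 32 admin guide in the download. Before relying on them, compare them with the mms.cfg in flash-eol-versions.zip. The function names are hypothetical.

```python
from pathlib import Path

# ASSUMPTION: settings taken from our reading of flash_player_32_0_admin_guide.pdf;
# compare them with the mms.cfg shipped in flash-eol-versions.zip.
DEFAULT_SETTINGS = {
    "AutoUpdateDisable": "1",       # turn off Flash auto-update
    "SilentAutoUpdateEnable": "0",  # turn off silent background updates
    "EOLUninstallDisable": "1",     # suppress the end-of-life uninstall prompt
    "EnableAllowList": "1",         # only run Flash on AllowListUrlPattern sites
}


def build_mms_cfg(urls, settings=None):
    """Return mms.cfg text: Key=Value settings, then one AllowListUrlPattern line per URL."""
    settings = DEFAULT_SETTINGS if settings is None else settings
    lines = [f"{key}={value}" for key, value in settings.items()]
    lines += [f"AllowListUrlPattern={url}" for url in urls]
    return "\n".join(lines) + "\n"


def save_mms_cfg(text, folder):
    """Save mms.cfg into folder as UTF-8 (Python's "utf-8" codec writes no BOM)."""
    path = Path(folder) / "mms.cfg"
    path.write_text(text, encoding="utf-8")
    return path
```

For example, save_mms_cfg(build_mms_cfg(["https://localhost/admin/", "http://testwebsite.com/"]), Path.home() / "Desktop") writes the file to your desktop. You still copy it into C:\Windows\SysWOW64\Macromed\Flash\ yourself, and that step needs administrator rights.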

Method 2 — Microsoft Windows, if you don’t have Internet Explorer and/or Flash installed

This method applies to almost any Windows machine. It assumes a 64-bit operating system.

- If you already have another version of Firefox installed, uninstall it.

- Download the “Firefox Setup 78.6.0esr-64bit.exe” and “policies.json” files. This Firefox installer is the Enterprise version (what you need).

- Install Firefox ESR, but do NOT open it; if it opens, close it right away.

- In the “C:\Program Files\Mozilla Firefox\” directory, create a folder called “distribution”

- Put “policies.json” file into the folder “distribution” — this disables automatic Firefox updates.

- Start Firefox ESR.

- Go to URL: about:policies

- Check that “DisableAppUpdate” policy is there and it says “True”.

- Set Firefox to be the default browser in Default Programs.

- Download “Flash player for Firefox and Win7 – use this for Solution: install_flash_player.exe” and “mms.cfg”.

- Double-click install_flash_player.exe to install Flash for Firefox. Click Next at every prompt.

- If you are prompted to choose “Update Flash Player Preferences”, select “Never Check for Updates”.

- Open the mms.cfg file with Notepad.

- Replace the URL to the right of the equals sign with the address of the Flash website or file that you need.

- Ex. AllowListUrlPattern=https://localhost/admin/

- If you need additional websites, put each one on its own line, as in these examples:

- AllowListUrlPattern=https://localhost/admin/

- AllowListUrlPattern=http://testwebsite.com/

- AllowListUrlPattern=*://*.finallystopflash.com/

- Save the mms.cfg file on the desktop.

- Important: if you are creating the file yourself instead of using my file, make sure that in the Notepad Save As dialog you select “All Files” as the type and “UTF-8” as the Encoding.

- Copy the “mms.cfg” file into the following directory: C:\Windows\SysWOW64\Macromed\Flash\

- This disables Flash updates and allows Flash to run on the specified websites.

- Restart Firefox ESR.

- When you go to the Flash website you specified, click the big logo in the middle of the page, then click “Allow”.
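The “distribution” folder steps above can also be scripted. This Python sketch writes a policies.json that contains only the DisableAppUpdate policy you check in about:policies. The policies.json in the article's download may contain more, so treat this minimal file as an assumption. The function name is hypothetical. Run it from an elevated prompt, because C:\Program Files is protected.

```python
import json
from pathlib import Path

# Minimal Firefox enterprise policy file: it only blocks application updates.
POLICIES = {"policies": {"DisableAppUpdate": True}}


def write_policies(firefox_dir=r"C:\Program Files\Mozilla Firefox"):
    """Create <firefox_dir>/distribution/policies.json and return its path."""
    dist = Path(firefox_dir) / "distribution"
    dist.mkdir(parents=True, exist_ok=True)  # safe to run more than once
    path = dist / "policies.json"
    path.write_text(json.dumps(POLICIES, indent=2), encoding="utf-8")
    return path
```

Run it after installing Firefox ESR and before starting it for the first time, which matches the order of the manual steps.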

Method 3 — Apple MacOS

This method applies to almost any MacOS version.

- If you already have another version of Firefox installed, uninstall it.

- Download “Firefox 78.6.0esr.dmg” and “policies.json” files. This Firefox ESR for Mac installer is the Enterprise version (what you need).

- Open the DMG file. Drag the Firefox ESR icon to the Applications folder, which installs it on the Mac. Do NOT open Firefox ESR yet.

- Open the Terminal application.

- Type the following and press Enter (start typing from xattr).

- xattr -r -d com.apple.quarantine /Applications/Firefox.app

- This removes the macOS quarantine attribute, which allows you to customize Firefox without the application being reported as corrupted.


- Go to the Applications folder.

- Right click on the Firefox.app application and select “Show Package Contents”.

- Open the Contents > Resources folder and create a folder called “distribution” there.

- Put “policies.json” file into the folder “distribution” — this disables automatic Firefox updates.

- Start Firefox ESR.

- Go to URL: about:policies

- Check that “DisableAppUpdate” policy is there and it says “True”.

- Download “Flash for Safari and Firefox – Mac: install_flash_player_osx.dmg” and “mms.cfg”.

- Double-click install_flash_player_osx.dmg to mount the disk image. Then double-click the installer to install Flash for Firefox.

- When prompted for “Update Flash Player Preferences”, select “Never Check for Updates (not recommended)”.

- Place the mms.cfg file on the Desktop. Open mms.cfg file with TextEdit.

- Replace the URL to the right of the equals sign with the address of the Flash website or file that you need.

- Ex. AllowListUrlPattern=https://localhost/admin/

- If you need additional websites, put each one on its own line, as in these examples:

- AllowListUrlPattern=https://localhost/admin/

- AllowListUrlPattern=http://testwebsite.com/

- AllowListUrlPattern=*://*.finallystopflash.com/

- Save the mms.cfg file to the Desktop, then copy it.

- Paste the “mms.cfg” file into the following directory:

- /Library/Application Support/Macromedia/ (Mac Hard Drive>Library>Application Support>Macromedia)

- If there is already an mms.cfg file there, replace it.

- This disables Flash updates and allows Flash to run on the specified websites.

- Restart Firefox ESR for Mac.

- When you go to the Flash website you specified, click the big logo in the middle of the page, then click “Allow”.
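On a Mac, the Terminal step and the two file copies in Method 3 can be combined in one Python sketch. It assumes Firefox ESR is installed at /Applications/Firefox.app and that you run the script with sudo, because /Library/Application Support is not writable by normal users. The root parameter exists only so you can try the paths in a scratch folder. The function names are hypothetical.

```python
import subprocess
from pathlib import Path


def remove_quarantine(app="/Applications/Firefox.app"):
    """Run the same command as the manual step: xattr -r -d com.apple.quarantine <app>."""
    # Returns xattr's exit code instead of raising, so a missing attribute is not fatal.
    return subprocess.run(["xattr", "-r", "-d", "com.apple.quarantine", app]).returncode


def deploy_mac(policies_json, mms_cfg, root="/"):
    """Write policies.json and mms.cfg into the folders used in Method 3."""
    root = Path(root)
    dist = root / "Applications/Firefox.app/Contents/Resources/distribution"
    macromedia = root / "Library/Application Support/Macromedia"
    for folder in (dist, macromedia):
        folder.mkdir(parents=True, exist_ok=True)
    policies_path = dist / "policies.json"
    mms_path = macromedia / "mms.cfg"
    policies_path.write_text(policies_json, encoding="utf-8")
    mms_path.write_text(mms_cfg, encoding="utf-8")  # replaces an existing mms.cfg
    return policies_path, mms_path
```

Call remove_quarantine() before deploy_mac(). This keeps the order of the manual steps, where the quarantine attribute is removed before anything is added inside Firefox.app.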

References

https://support.mozilla.org/en-US/questions/1283061

https://community.adobe.com/t5/flash-player/adobe-flash-availability-after-2020/td-p/10929047?page=1

https://support.mozilla.org/en-US/kb/deploying-firefox-customizations-macos

VMware vSphere misidentifies local or SAN-attached SSD drives as non-SSD

Symptom:

You are trying to configure the Host Cache Configuration feature in VMware vSphere. Host Cache lets ESXi swap memory to a local SSD drive when the host runs into memory constraints. It is similar to the well-known Windows ReadyBoost.

Host Cache requires a drive that ESXi detects as SSD. If ESXi does not report the drive type as SSD, it will not allow Host Cache Configuration.

However, even though you have installed local SSD drives in the ESXi host and are also presenting an SSD drive from your storage array, ESXi refuses to recognize the drives as SSD type and therefore will not let you use Host Cache.

Solution:

Use a few CLI commands to force ESXi to recognize that your drive really is an SSD. Then reconfigure Host Cache.

Instructions:

Look up the name of each disk and its naa.xxxxxx number in the vSphere Client. In our example, the disks that are not properly showing as SSD are:

- Dell Serial Attached SCSI Disk (naa.600508e0000000002edc6d0e4e3bae0e) — local SSD

- DGC Fibre Channel Disk (naa.60060160a89128005a6304b3d121e111) — SAN-attached SSD

Check in the GUI that both show up as non-SSD type.

SSH to the ESXi host. Disk names are unique to each host, so on every ESXi host you must look up that host's disk names and run the commands below separately.

Type the following command and find the NAA numbers of your disks.

In the examples below, lines beginning with “~ #” are the commands you type; everything else is output.

———————————————————————————————-

~ # esxcli storage nmp device list

naa.600508e0000000002edc6d0e4e3bae0e

Device Display Name: Dell Serial Attached SCSI Disk (naa.600508e0000000002edc6d0e4e3bae0e)

Storage Array Type: VMW_SATP_LOCAL

Storage Array Type Device Config: SATP VMW_SATP_LOCAL does not support device configuration.

Path Selection Policy: VMW_PSP_FIXED

Path Selection Policy Device Config: {preferred=vmhba0:C1:T0:L0;current=vmhba0:C1:T0:L0}

Path Selection Policy Device Custom Config:

Working Paths: vmhba0:C1:T0:L0

naa.60060160a89128005a6304b3d121e111

Device Display Name: DGC Fibre Channel Disk (naa.60060160a89128005a6304b3d121e111)

Storage Array Type: VMW_SATP_ALUA_CX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_support=on; explicit_allow=on;alua_followover=on;{TPG_id=1,TPG_state=ANO}{TPG_id=2,TPG_state=AO}}

Path Selection Policy: VMW_PSP_RR

Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=0;lastPathIndex=1: NumIOsPending=0,numBytesPending=0}

Path Selection Policy Device Custom Config:

Working Paths: vmhba2:C0:T1:L0

naa.60060160a891280066fa0275d221e111

Device Display Name: DGC Fibre Channel Disk (naa.60060160a891280066fa0275d221e111)

Storage Array Type: VMW_SATP_ALUA_CX

Storage Array Type Device Config: {navireg=on, ipfilter=on}{implicit_support=on;explicit_support=on; explicit_allow=on;alua_followover=on;{TPG_id=1,TPG_state=ANO}{TPG_id=2,TPG_state=AO}}

Path Selection Policy: VMW_PSP_RR

Path Selection Policy Device Config: {policy=rr,iops=1000,bytes=10485760,useANO=0;lastPathIndex=1: NumIOsPending=0,numBytesPending=0}

Path Selection Policy Device Custom Config:

Working Paths: vmhba2:C0:T1:L3

———————————————————————————————-

Note that the Storage Array Type is VMW_SATP_LOCAL for the local SSD drive and VMW_SATP_ALUA_CX for the SAN-attached SSD drive.
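If a host has many LUNs, you can pull each device's Storage Array Type out of the saved output instead of reading it by eye. This Python sketch parses text copied from `esxcli storage nmp device list`. It assumes, as in the sample above, that each device starts on a line containing only its naa.* ID. Devices named eui.* or t10.* are not handled. The function name is hypothetical.

```python
import re

# Device header lines in the sample output are bare NAA IDs.
DEVICE_RE = re.compile(r"naa\.[0-9a-f]+")


def satp_by_device(nmp_output):
    """Map each naa.* device in `esxcli storage nmp device list` output to its Storage Array Type."""
    devices = {}
    current = None
    for raw in nmp_output.splitlines():
        line = raw.strip()
        if DEVICE_RE.fullmatch(line):
            current = line  # a new device block starts here
        elif current and line.startswith("Storage Array Type:"):
            # "Storage Array Type Device Config:" does not match this prefix.
            devices[current] = line.split(":", 1)[1].strip()
    return devices
```

The result is the device-to-SATP map you need when you add the enable_ssd rules.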

Next, check whether ESXi reports each disk as SSD or non-SSD in the CLI. Make sure to specify your own NAA number when typing the command.

———————————————————————————————-

~ # esxcli storage core device list --device=naa.600508e0000000002edc6d0e4e3bae0e

naa.600508e0000000002edc6d0e4e3bae0e

Display Name: Dell Serial Attached SCSI Disk (naa.600508e0000000002edc6d0e4e3bae0e)

Has Settable Display Name: true

Size: 94848

Device Type: Direct-Access

Multipath Plugin: NMP

Devfs Path: /vmfs/devices/disks/naa.600508e0000000002edc6d0e4e3bae0e

Vendor: Dell

Model: Virtual Disk

Revision: 1028

SCSI Level: 6

Is Pseudo: false

Status: degraded

Is RDM Capable: true

Is Local: false

Is Removable: false

Is SSD: false

Is Offline: false

Is Perennially Reserved: false

Thin Provisioning Status: unknown

Attached Filters:

VAAI Status: unknown

Other UIDs: vml.0200000000600508e0000000002edc6d0e4e3bae0e566972747561

~ # esxcli storage core device list --device=naa.60060160a89128005a6304b3d121e111

naa.60060160a89128005a6304b3d121e111

Display Name: DGC Fibre Channel Disk (naa.60060160a89128005a6304b3d121e111)

Has Settable Display Name: true

Size: 435200

Device Type: Direct-Access

Multipath Plugin: NMP

Devfs Path: /vmfs/devices/disks/naa.60060160a89128005a6304b3d121e111

Vendor: DGC

Model: VRAID

Revision: 0430

SCSI Level: 4

Is Pseudo: false

Status: on

Is RDM Capable: true

Is Local: false

Is Removable: false

Is SSD: false

Is Offline: false

Is Perennially Reserved: false

Thin Provisioning Status: yes

Attached Filters: VAAI_FILTER

VAAI Status: supported

Other UIDs: vml.020000000060060160a89128005a6304b3d121e111565241494420

———————————————————————————————-
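To check many devices at once, you can parse the saved output of `esxcli storage core device list` and list every device that does not yet report “Is SSD: true”. This Python sketch assumes the layout shown above: a bare device ID line, followed by “Field: value” lines. The function names are hypothetical.

```python
def parse_core_devices(core_output):
    """Parse `esxcli storage core device list` output into {device_id: {field: value}}."""
    devices = {}
    fields = None
    for raw in core_output.splitlines():
        line = raw.strip()
        if not line or line.startswith("~ #"):
            continue  # skip blank lines and echoed commands
        if ":" not in line:
            fields = devices.setdefault(line, {})  # a bare line starts a new device
        elif fields is not None:
            key, value = line.split(":", 1)
            fields[key.strip()] = value.strip()
    return devices


def non_ssd_devices(core_output):
    """Return the device IDs whose "Is SSD" field is not "true"."""
    return [dev for dev, f in parse_core_devices(core_output).items() if f.get("Is SSD") != "true"]
```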

Now add a rule to enable SSD on those 2 disks. Use the Storage Array Type you noted for each disk as the --satp value, and make sure to specify your own NAA numbers when typing the commands.

———————————————————————————————-

~ # esxcli storage nmp satp rule add --satp VMW_SATP_LOCAL --device naa.600508e0000000002edc6d0e4e3bae0e --option=enable_ssd

~ # esxcli storage nmp satp rule add --satp VMW_SATP_ALUA_CX --device naa.60060160a89128005a6304b3d121e111 --option=enable_ssd

———————————————————————————————-
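When several disks need the rule, you can generate the commands from a device-to-SATP map, such as the Storage Array Types you noted from the nmp device list output. This Python sketch only builds the command strings; you still paste them into the ESXi shell. It also builds the matching reclaim commands used in the next step. The function names are hypothetical.

```python
def enable_ssd_commands(satp_map):
    """Build one `esxcli storage nmp satp rule add` command per {device_id: satp} entry."""
    return [
        f"esxcli storage nmp satp rule add --satp {satp} --device {device} --option=enable_ssd"
        for device, satp in satp_map.items()
    ]


def reclaim_commands(satp_map):
    """Build the matching `esxcli storage core claiming reclaim` command for each device."""
    return [f"esxcli storage core claiming reclaim -d {device}" for device in satp_map]
```

For the two disks in this example, the map is {"naa.600508e0000000002edc6d0e4e3bae0e": "VMW_SATP_LOCAL", "naa.60060160a89128005a6304b3d121e111": "VMW_SATP_ALUA_CX"}.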

Next, we will check to see that the commands took effect for the 2 disks.

———————————————————————————————-

~ # esxcli storage nmp satp rule list | grep enable_ssd

VMW_SATP_ALUA_CX naa.60060160a89128005a6304b3d121e111 enable_ssd user

VMW_SATP_LOCAL naa.600508e0000000002edc6d0e4e3bae0e enable_ssd user

———————————————————————————————-
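To confirm that every disk you intended to change has its rule, you can compare the grep output with the devices you expected. This Python sketch assumes the column layout shown above: the SATP name first, then the device ID, with enable_ssd among the remaining columns. The function names are hypothetical.

```python
def enable_ssd_rules(rule_list_output):
    """Return {device_id: satp} for `satp rule list` rows that carry the enable_ssd option."""
    rules = {}
    for line in rule_list_output.splitlines():
        cols = line.split()
        if len(cols) >= 2 and "enable_ssd" in cols and cols[1].startswith("naa."):
            rules[cols[1]] = cols[0]
    return rules


def missing_rules(expected, rule_list_output):
    """Return devices from expected ({device_id: satp}) without a matching enable_ssd rule."""
    found = enable_ssd_rules(rule_list_output)
    return [dev for dev, satp in expected.items() if found.get(dev) != satp]
```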

Then, we will run storage reclaim commands on those 2 disks. Make sure to specify your own NAA number when typing the commands.

———————————————————————————————-

~ # esxcli storage core claiming reclaim -d naa.60060160a89128005a6304b3d121e111

~ # esxcli storage core claiming reclaim -d naa.600508e0000000002edc6d0e4e3bae0e

Unable to unclaim path vmhba0:C1:T0:L0 on device naa.600508e0000000002edc6d0e4e3bae0e. Some paths may be left in an unclaimed state. You will need to claim them manually using the appropriate commands or wait for periodic path claiming to reclaim them automatically.

———————————————————————————————-

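If you get the error message above, that's OK. As the message says, periodic path claiming will reclaim the path automatically, so the change can take some time to take effect.

You can check progress in the CLI by running the command below and looking at the “Is SSD” line. In our example, the local disk still reported “Is SSD: false” at this point: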

———————————————————————————————-

~ # esxcli storage core device list --device=naa.600508e0000000002edc6d0e4e3bae0e

naa.600508e0000000002edc6d0e4e3bae0e

Display Name: Dell Serial Attached SCSI Disk (naa.600508e0000000002edc6d0e4e3bae0e)

Has Settable Display Name: true

Size: 94848

Device Type: Direct-Access

Multipath Plugin: NMP

Devfs Path: /vmfs/devices/disks/naa.600508e0000000002edc6d0e4e3bae0e

Vendor: Dell

Model: Virtual Disk

Revision: 1028

SCSI Level: 6

Is Pseudo: false

Status: degraded

Is RDM Capable: true

Is Local: false

Is Removable: false

Is SSD: false

Is Offline: false

Is Perennially Reserved: false

Thin Provisioning Status: unknown

Attached Filters:

VAAI Status: unknown

Other UIDs: vml.0200000000600508e0000000002edc6d0e4e3bae0e566972747561

———————————————————————————————-

Check in the vSphere Client GUI. Rescan storage.

If it still does NOT say SSD, reboot the ESXi host.

Then look in the GUI and rerun the command below.

———————————————————————————————-

~ # esxcli storage core device list --device=naa.60060160a89128005a6304b3d121e111

naa.60060160a89128005a6304b3d121e111

Display Name: DGC Fibre Channel Disk (naa.60060160a89128005a6304b3d121e111)

Has Settable Display Name: true

Size: 435200

Device Type: Direct-Access

Multipath Plugin: NMP

Devfs Path: /vmfs/devices/disks/naa.60060160a89128005a6304b3d121e111

Vendor: DGC

Model: VRAID

Revision: 0430

SCSI Level: 4

Is Pseudo: false

Status: on

Is RDM Capable: true

Is Local: false

Is Removable: false

Is SSD: true

Is Offline: false

Is Perennially Reserved: false

Thin Provisioning Status: yes

Attached Filters: VAAI_FILTER

VAAI Status: supported

Other UIDs: vml.020000000060060160a89128005a6304b3d121e111565241494420

———————————————————————————————-

If it still does NOT say SSD, you need to wait. Eventually the change takes effect, and the disk shows as SSD in both the CLI and the GUI.
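Instead of rerunning the check by hand while you wait, you can poll it. This Python sketch repeatedly calls a function that returns the device-list output and stops once the output contains “Is SSD: true” or a timeout expires. The SSH helper is hypothetical. It assumes SSH is enabled on the host and that key-based root login is already set up. The timeout and interval are arbitrary defaults.

```python
import subprocess
import time


def reports_ssd(core_output):
    """True if the device-list output contains the line "Is SSD: true"."""
    return any(line.strip() == "Is SSD: true" for line in core_output.splitlines())


def wait_for_ssd(fetch, timeout_s=3600, interval_s=60, sleep=time.sleep):
    """Call fetch() until its output reports SSD or timeout_s elapses; return True on success."""
    deadline = time.monotonic() + timeout_s
    while True:
        if reports_ssd(fetch()):
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval_s)


def ssh_fetch(host, device):
    """HYPOTHETICAL helper: run the article's check over SSH (assumes key-based root login)."""
    cmd = ["ssh", f"root@{host}", "esxcli", "storage", "core", "device", "list",
           f"--device={device}"]
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
```

A call would look like wait_for_ssd(lambda: ssh_fetch(host, device)), with your own host name and naa.xxxxxx device ID.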


Collateral for my session at the HP Discover 2014 conference

Thank you to the 260 people who attended my session and filled out the survey!

I am very grateful that you keep coming to hear what I have to say and hope to be back next year.

My presentation is called “TB3306 – Tips and tricks on building VMware vSphere 5.5 with BladeSystem, Virtual Connect, and HP 3PAR StoreServ storage”

Returning for the sixth year in a row, this tips-and-techniques session is for administrators and consultants who want to implement VMware ESXi 5.5 (vSphere) on HP c-Class BladeSystem, Virtual Connect, and HP 3PAR StoreServ storage. New topics will include the auto-deployment of domain configurations and Single Root I/O Virtualization (SR-IOV) for bypassing vSwitches. The session will focus on real-world examples of VMware and HP best practices. For example, you will learn how to load-balance SAN paths; make Virtual Connect really “connect” to Cisco IP switches in a true active/active fashion; configure VLANs for the Virtual Connect modules and virtual switches; solve firmware and driver problems. In addition, you will receive tips on how to make sound design decisions for iSCSI vs. Fibre Channel, and boot from SAN vs. local boot. To get the most from this session, we recommend attendees have a basic understanding of VMware ESX, HP c-Class BladeSystem, and Virtual Connect.

Here are the collateral files for the session:

Slides:

Use the #HPtrick hashtag to chat with me on Twitter:

Monday, June 16, 2014, 2–3 pm Eastern Time (11 am – 12 pm Pacific Time).

Speaking at the HP Discover 2014 conference.

This is the sixth year I have had the honor of speaking at the HP Discover conference.

Thank you to past attendees who rated my session highly, and to the HP staff who selected it.

Here is the official HP link to the session:

https://h30496.www3.hp.com/connect/sessionDetail.ww?SESSION_ID=3306

Thank you to Morgan O’Leary of VMware for highlighting my session on the official VMware company blog:

http://blogs.vmware.com/vmware/2014/06/register-now-hp-discover-las-vegas-2014.html

Session time:

Wednesday, June 11, 2014

9–10 am Pacific Time (12–1 pm Eastern Time).

Room: Lando 4202.

My presentation number is TB3306 and it is called “Tips and tricks on building VMware vSphere 5.5 with BladeSystem, Virtual Connect, and HP 3PAR StoreServ storage”

This tips-and-techniques session is for administrators and consultants who want to implement VMware ESXi 5.5 (vSphere) on HP c-Class BladeSystem, Virtual Connect, and HP 3PAR StoreServ storage. New topics will include the auto-deployment of domain configurations and Single Root I/O Virtualization (SR-IOV) for bypassing vSwitches. The session will focus on real-world examples of VMware and HP best practices. For example, you will learn how to load-balance SAN paths; make Virtual Connect really “connect” to Cisco IP switches in a true active/active fashion; configure VLANs for the Virtual Connect modules and virtual switches; solve firmware and driver problems. In addition, you will receive tips on how to make sound design decisions for iSCSI vs. Fibre Channel, and boot from SAN vs. local boot. To get the most from this session, we recommend attendees have a basic understanding of VMware ESX, HP c-Class BladeSystem, and Virtual Connect.

You will be able to download the slides from my session the evening of June 12 on this blog.

Please live-tweet points you find interesting during the session, using the following hashtag:

#HPtrick

Look for suggested tricks in the slides.

In addition, use the #HPtrick hashtag to chat with me on Twitter:

Monday, June 16, 2014, 2–3 pm Eastern Time (11 am – 12 pm Pacific Time).

If you would like to attend the HP conference in person, please register:

https://h30496.www3.hp.com/portal/newreg.ww

Then, choose session TB3306 in the Session Scheduler:

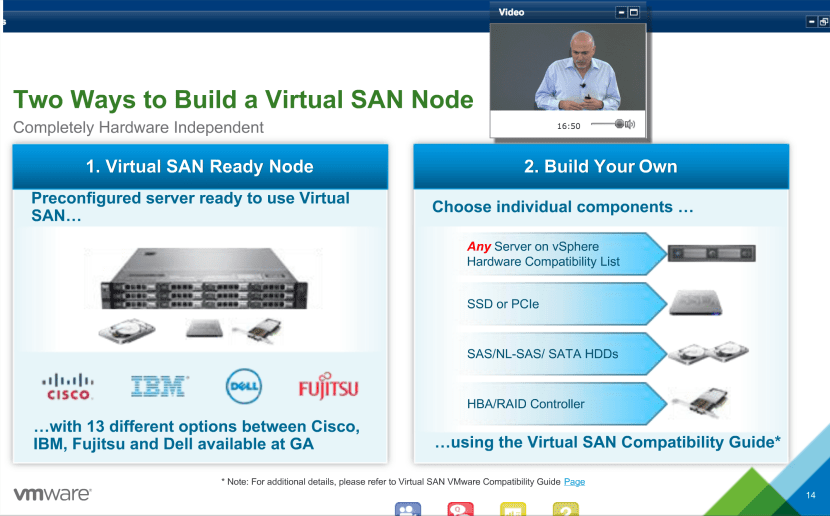

VMware announces VSAN to be released around March 10th.

Ben Fathi, the CTO of VMware, announced the Virtual Storage Area Network (VSAN) feature in vSphere ESX on March 6, 2014.


VSAN is a storage technology that pools all local disks on multiple servers into one large distributed volume. Caching is done via an SSD drive.

Unfortunately, licensing and pricing details will not be released until VSAN General Availability, around March 10th.

At launch, VSAN will have the following features:

- Full support for VMware Horizon / View (no VSAN inside View — yet)

- Up to 32 nodes.

- Up to 2 million IOPS.

- 4.5 PB of space.

- 13 VSAN Ready Node configurations at launch using Cisco, IBM, Fujitsu or Dell servers.

- Build-your-own configurations supported.

However, VSAN will also have the following requirements:

- At least 1 SSD drive.

- Up to 7 mechanical drives.

- Cannot use all SSDs or SAN storage.

- SSD must be at least 10% of space.

- Need ESXi 5.5 Update 1.
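The launch requirements above, plus the 3-server minimum mentioned in the analysis below, can be turned into a quick pre-check for a planned cluster. This Python sketch uses only the numbers stated in this post. Two points are assumptions. First, the drive limits are read as applying per disk group. Second, “SSD must be at least 10% of space” is read as SSD capacity compared with mechanical-drive capacity; VMware's official sizing guidance may define it differently. The function name is hypothetical.

```python
def vsan_precheck(hosts, ssd_gb, hdd_gb):
    """Return problems with a planned VSAN cluster; an empty list means it passes.

    hosts: number of ESXi hosts in the cluster.
    ssd_gb, hdd_gb: capacities in GB of the SSDs and mechanical drives in one disk group.
    """
    problems = []
    if not 3 <= hosts <= 32:
        problems.append("cluster must have 3 to 32 hosts")
    if len(ssd_gb) < 1:
        problems.append("need at least 1 SSD")
    if not 1 <= len(hdd_gb) <= 7:
        problems.append("need 1 to 7 mechanical drives (all-SSD is not allowed)")
    # ASSUMPTION: "10% of space" read as SSD capacity vs. mechanical capacity.
    if sum(ssd_gb) < 0.10 * sum(hdd_gb):
        problems.append("SSD capacity is below 10% of mechanical capacity")
    return problems
```

For example, 3 hosts, each with one 800 GB SSD and seven 900 GB drives, pass the check, because 800 GB is more than 10% of 6,300 GB.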

VSAN competitors:

- EMC’s ScaleIO: can build distributed storage on any OS (Windows and Linux, plus VMware) and scales to more nodes (per Duncan Epping).

- Nutanix — server, storage, VMware in a customized box.

- Simplivity — same concept as Nutanix.

- Pivot3 — same concept as Nutanix.

- Virtual Storage Appliance (VSA) solutions (VMware’s own VSA, Atlantis, HP LeftHand VSA, etc.).

- Regular storage arrays.

- All-flash storage arrays (XtremIO, EMC VNX-F, Cisco’s Whiptail/Invicta).

Analysis:

There is a lot of interest in VSAN. The beta had more than 10,000 people sign up. Some VMware partners around the country are preparing solutions already, ready to sell to eager customers.

However, everything depends on how it is licensed and priced. The price has to be lower than traditional storage and even VSA solutions (except maybe VMware’s VSA). Only then will it make sense for smaller customers.

Pricing aside, VSAN is perfect, especially for lower-end Virtual Desktop Infrastructure (VDI): it is easy to set up (one checkbox), needs a minimum of only 3 servers, and provides enough IOPS with SSD caching. We are planning to use it for VDI.

Twitter Chat about HP blades, VMware, Virtual Connect & Storage

Use the #HPtrick hashtag to chat with me on Twitter:

Tuesday, June 18, 2013, 2–3 pm Eastern Time (11 am – 12 pm Pacific Time).

The chat will be about HP blades, VMware, Virtual Connect & HP Storage, to answer new and remaining questions for my session at HP Discover 2013 “TB2603 – Building VMware vSphere 5.1 with blades, Virtual Connect and EVA.”

How to Twitter Chat:

Go to Twitter.com or the Twitter mobile app and search for #HPtrick using the search field at the top.

Then, tweet a question and make sure to include the #HPtrick expression (hashtag) in the question.

I will be monitoring the #HPtrick hashtag for questions and will reply with an answer that also includes the #HPtrick hashtag.

Refresh your browser page or mobile app to see the answer to your question.

See you there!

Collateral for my session at the HP Discover 2013 conference

Thank you to those who attended my session and filled out the survey! I hope to be back next year.

My presentation is called “TB2603 – Building VMware vSphere 5.1 with blades, Virtual Connect and EVA.”

The session will discuss how VMware ESXi 5.1 (vSphere) can be implemented on HP c-Class blades, Virtual Connect and EVA, as well as the Flex-10/10D module and WS460c Gen8 blade with eight GPUs. The presentation will focus on real-world VMware and HP best practices, including how to load balance storage area network paths, how to make Virtual Connect really “connect” to Cisco Internet protocol switches, how to configure virtual local area networks for the Virtual Connect modules and VMware virtual switches and how to solve firmware and driver headaches with Virtual Connect and ESXi 5.1. Attendees will also receive tips on design decisions. A basic understanding of VMware, c-Class blades and Virtual Connect is recommended.

Here are the collateral files for the session:

Slides:

HP Documents:

DISCOVER_2013_HOL2653_Student_LAB_Guide_Rev.1.1

Use the #HPtrick hashtag to chat with me on Twitter:

Tuesday, June 18, 2013, 2–3 pm Eastern Time (11 am – 12 pm Pacific Time).

Speaking at the HP Discover 2013 conference

This is the fifth year I have had the honor of speaking at the HP Discover conference.

Thank you to past attendees who rated my session highly, and to the HP staff who selected it.

Session time:

Wednesday, June 12, 2013

4:30–5:30 pm Pacific Time (7:30–8:30 pm Eastern Time).

My presentation is called “TB2603 – Building VMware vSphere 5.1 with blades, Virtual Connect and EVA.”

The session will discuss how VMware ESXi 5.1 (vSphere) can be implemented on HP c-Class blades, Virtual Connect and EVA, as well as the Flex-10/10D module and WS460c Gen8 blade with eight GPUs. The presentation will focus on real-world VMware and HP best practices, including how to load balance storage area network paths, how to make Virtual Connect really “connect” to Cisco Internet protocol switches, how to configure virtual local area networks for the Virtual Connect modules and VMware virtual switches and how to solve firmware and driver headaches with Virtual Connect and ESXi 5.1. Attendees will also receive tips on design decisions. A basic understanding of VMware, c-Class blades and Virtual Connect is recommended.

Here is the official HP link to the session:

https://h30496.www3.hp.com/connect/sessionDetail.ww?SESSION_ID=2603&tclass=popup

You will be able to download the session slides starting the evening of June 12 on this blog.

This year, I decided to do some Social Media experiments.

So, please live-tweet points you find interesting during the session, using the following hashtag:

#HPtrick

Look for suggested tricks in the slides.

In addition, use the #HPtrick hashtag to chat with me on Twitter:

Tuesday, June 18, 2013, 2–3 pm Eastern Time (11 am – 12 pm Pacific Time).

If you would like to attend the HP conference in person, please register:

https://h30496.www3.hp.com/portal/newreg.ww

Then, choose session TB2603 in the Session Scheduler: